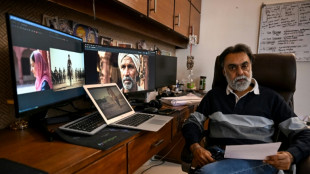

Generative AI's most prominent skeptic doubles down

Two and a half years after ChatGPT rocked the world, scientist and writer Gary Marcus remains generative artificial intelligence's great skeptic, offering a counter-narrative to Silicon Valley's AI true believers.

Marcus became a prominent figure of the AI revolution in 2023, when he sat beside OpenAI chief Sam Altman at a Senate hearing in Washington as both men urged politicians to take the technology seriously and consider regulation.

Much has changed since then. Altman has abandoned his calls for caution, instead teaming up with Japan's SoftBank and funds in the Middle East to propel his company to sky-high valuations as he tries to make ChatGPT the next era-defining tech behemoth.

"Sam's not getting money anymore from the Silicon Valley establishment," and his seeking funding from abroad is a sign of "desperation," Marcus told AFP on the sidelines of the Web Summit in Vancouver, Canada.

Marcus's criticism centers on a fundamental belief: generative AI, the predictive technology that churns out seemingly human-level content, is simply too flawed to be transformative.

The large language models (LLMs) that power these capabilities are inherently broken, he argues, and will never deliver on Silicon Valley's grand promises.

"I'm skeptical of AI as it is currently practiced," he said. "I think AI could have tremendous value, but LLMs are not the way there. And I think the companies running it are not mostly the best people in the world."

His skepticism stands in stark contrast to the prevailing mood at the Web Summit, where most conversations among 15,000 attendees focused on generative AI's seemingly infinite promise.

Many believe humanity stands on the cusp of achieving superintelligence or artificial general intelligence (AGI), technology that could match and even surpass human capabilities.

That optimism has driven OpenAI's valuation to $300 billion, an unprecedented level for a startup, with billionaire Elon Musk's xAI racing to keep pace.

Yet for all the hype, the practical gains remain limited.

The technology excels mainly at coding assistance for programmers and text generation for office work. AI-created images, while often entertaining, serve primarily as memes or deepfakes, offering little obvious benefit to society or business.

Marcus, a longtime New York University professor, champions a fundamentally different approach to building AI -- one he believes might actually achieve human-level intelligence in ways that current generative AI never will.

"One consequence of going all-in on LLMs is that any alternative approach that might be better gets starved out," he explained.

This tunnel vision will "cause a delay in getting to AI that can help us beyond just coding -- a waste of resources."

- 'Right answers matter' -

Instead, Marcus advocates for neurosymbolic AI, an approach that attempts to rebuild human logic artificially rather than simply training computer models on vast datasets, as is done with ChatGPT and similar products like Google's Gemini or Anthropic's Claude.

He dismisses fears that generative AI will eliminate white-collar jobs, citing a simple reality: "There are too many white-collar jobs where getting the right answer actually matters."

This points to AI's most persistent problem: hallucinations, the technology's well-documented tendency to produce confident-sounding mistakes.

Even AI's strongest advocates acknowledge this flaw may be impossible to eliminate.

Marcus recalls a telling exchange from 2023 with LinkedIn founder Reid Hoffman, a Silicon Valley heavyweight: "He bet me any amount of money that hallucinations would go away in three months. I offered him $100,000 and he wouldn't take the bet."

Looking ahead, Marcus warns of a darker consequence once investors realize generative AI's limitations: companies like OpenAI, he argues, will inevitably monetize their most valuable asset, user data.

"The people who put in all this money will want their returns, and I think that's leading them toward surveillance," he said, pointing to Orwellian risks for society.

"They have all this private data, so they can sell that as a consolation prize."

Marcus acknowledges that generative AI will find useful applications in areas where occasional errors don't matter much.

"They're very useful for auto-complete on steroids: coding, brainstorming, and stuff like that," he said.

"But nobody's going to make much money off it because they're expensive to run, and everybody has the same product."

S.Abdullah--SF-PST