-

German investor morale lowest in over 3 years on Iran war fallout

German investor morale lowest in over 3 years on Iran war fallout

-

FedEx faces French 'genocide' complaint over Israel cargoes

-

No Iran delegation sent to US talks yet as truce expiry nears

No Iran delegation sent to US talks yet as truce expiry nears

-

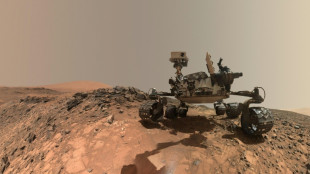

Rover discovers more building blocks of life on Mars

-

Russia, North Korea connect road bridge ahead of summer opening

Russia, North Korea connect road bridge ahead of summer opening

-

'Strangled': Pakistan faces economic imperative in Iran war peace push

-

Apple's Tim Cook to step down as CEO after 15-year run

Apple's Tim Cook to step down as CEO after 15-year run

-

Michael Jackson fans pack Hollywood for biopic premiere

-

Turkey arrests 110 coal miners on hunger strike

Turkey arrests 110 coal miners on hunger strike

-

Oil prices dip, stocks rise on lingering Iran peace hopes

-

Associated British Foods to spin off Primark clothes brand

Associated British Foods to spin off Primark clothes brand

-

Pope visits Eq. Guinea on last stop of Africa tour

-

Hello Kitty's parent company to make own video games

Hello Kitty's parent company to make own video games

-

Di Matteo says 'vital' for faltering Chelsea to add experience

-

Ex-Spurs star Davids condemns 'lack of quality, lack of management'

Ex-Spurs star Davids condemns 'lack of quality, lack of management'

-

Turkmenistan, the gas giant increasingly dependent on China

-

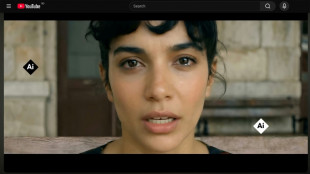

Romanian AI music sensation Lolita sparks racism debate

Romanian AI music sensation Lolita sparks racism debate

-

Timberwolves battle back to stun Nuggets in NBA playoffs

-

Eta appointment 'no surprise' for Union Berlin's ascendant women

Eta appointment 'no surprise' for Union Berlin's ascendant women

-

Democrats eye Virginia gains in war with Trump over US voting map

-

Tourists trickle back to Kashmir, one year after deadly attack

Tourists trickle back to Kashmir, one year after deadly attack

-

Inside the world of ultra-luxury wedding cakes

-

Chinese AI circuit board maker soars on Hong Kong debut

Chinese AI circuit board maker soars on Hong Kong debut

-

Oil prices dip, most stocks rise on lingering Iran peace hopes

-

Tim Cook's time as Apple chief marked by profit absent awe

Tim Cook's time as Apple chief marked by profit absent awe

-

Mitchell, Harden shine as Cavs down Raptors for 2-0 series lead

-

El Salvador's missing thousands buried by official indifference

El Salvador's missing thousands buried by official indifference

-

Trump's Fed chair pick to face lawmakers at key confirmation hearing

-

PGA Tour to scrap Hawaii opening events from 2027

PGA Tour to scrap Hawaii opening events from 2027

-

Amazon invests another $5 bn in Anthropic

-

Israel PM vows 'harsh action' against soldier vandalising Jesus statue in Lebanon

Israel PM vows 'harsh action' against soldier vandalising Jesus statue in Lebanon

-

New Report Reveals Widespread Misunderstanding of Consumer Messaging App Security Across Government and Critical Infrastructure

-

Wembanyama wins NBA defensive player of the year

Wembanyama wins NBA defensive player of the year

-

'The Devil Wears Prada 2' stars reunite for glamorous premiere

-

El Salvador holds mass trial of nearly 500 alleged gang members

El Salvador holds mass trial of nearly 500 alleged gang members

-

Apple's Tim Cook to step down as CEO in September

-

West Ham's draw at Palace relegates Wolves, piles pressure on Spurs

West Ham's draw at Palace relegates Wolves, piles pressure on Spurs

-

Canadian tourist killed in Mexico archaeological site shooting

-

Wolves relegated from Premier League

Wolves relegated from Premier League

-

Oil jumps on Hormuz tensions, stocks mostly retreat

-

Colombian environmental activist honored amid threats and exile

Colombian environmental activist honored amid threats and exile

-

Gun battle traps more than 200 tourists at Rio viewpoint

-

Alcaraz may skip French Open rather than rush injury comeback

Alcaraz may skip French Open rather than rush injury comeback

-

Top US court to hear case of Catholic schools excluded from state funding

-

Trump Fed chair pick to vow interest rate independence at key hearing

Trump Fed chair pick to vow interest rate independence at key hearing

-

EU to host Taliban officials for talks on deporting Afghans

-

Blue Origin probing rocket's failure to deliver satellite

Blue Origin probing rocket's failure to deliver satellite

-

Pope blasts 'exploitation' as he wraps up tour of Angola

-

Wembanyama 'changing the game as we speak', says Nowitzki

Wembanyama 'changing the game as we speak', says Nowitzki

-

Singer D4vd charged with murder after teen's body found in Tesla

Inbred, gibberish or just MAD? Warnings rise about AI models

When academic Jathan Sadowski reached for an analogy last year to describe how AI programs decay, he landed on the term "Habsburg AI".

The Habsburgs were one of Europe's most powerful royal houses, but entire sections of their family line collapsed after centuries of inbreeding.

Recent studies have shown how AI programs underpinning products like ChatGPT go through a similar collapse when they are repeatedly fed their own data.

"I think the term Habsburg AI has aged very well," Sadowski told AFP, saying his coinage had "only become more relevant for how we think about AI systems".

The ultimate concern is that AI-generated content could take over the web, which could in turn render chatbots and image generators useless and throw a trillion-dollar industry into a tailspin.

But other experts argue that the problem is overstated, or can be fixed.

And many companies are enthusiastic about using what they call synthetic data to train AI programs. This artificially generated data is used to augment or replace real-world data. It is cheaper than human-created content but more predictable.

"The open question for researchers and companies building AI systems is: how much synthetic data is too much," said Sadowski, lecturer in emerging technologies at Australia's Monash University.

- 'Mad cow disease' -

Training large language models (LLMs), as these AI programs are known in the industry, involves scraping vast quantities of text or images from the internet.

This information is broken into trillions of tiny machine-readable chunks, known as tokens.

When asked a question, a program like ChatGPT selects and assembles tokens in a way that its training data tells it is the most likely sequence to fit with the query.
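As a rough illustration of "most likely sequence", the toy sketch below counts which word follows which in a tiny made-up corpus and then always picks the most frequent follower. It is a deliberate simplification: real LLMs use subword tokens and neural networks rather than word-pair counts, and the function names `train_bigrams` and `generate` are hypothetical, chosen only for this example.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each whitespace-separated token, which tokens follow it."""
    tokens = text.split()
    follow = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follow[current][nxt] += 1
    return follow


def generate(follow, start, max_tokens):
    """Greedily extend `start` with the most frequent follower each step."""
    out = [start]
    while len(out) < max_tokens:
        followers = follow.get(out[-1])
        if not followers:
            break  # the last token was never followed by anything
        out.append(followers.most_common(1)[0][0])
    return out


# Toy corpus (an assumption for illustration, not real training data).
corpus = "the cat sat on the mat the cat ran"
model = train_bigrams(corpus)
print(" ".join(generate(model, "the", 4)))
```

Because "cat" follows "the" twice and "mat" only once in the toy corpus, the sketch always continues "the" with "cat", which is the sense in which the output reflects what the training data makes most likely.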

But even the best AI tools generate falsehoods and nonsense, and critics have long expressed concern about what would happen if a model were fed its own outputs.

In late July, a paper in the journal Nature titled "AI models collapse when trained on recursively generated data" proved a lightning rod for discussion.

The authors described how models quickly discarded rarer elements in their original dataset and, as Nature reported, outputs degenerated into "gibberish".
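The loss of rare elements can be sketched with a toy simulation. This is not the method used in the Nature paper: it assumes a "model" that is nothing more than a table of token frequencies, re-estimated each generation from a finite sample of its own output. The token names, probabilities and the function names `refit` and `simulate_collapse` are all hypothetical.

```python
import random
from collections import Counter


def refit(samples):
    """Estimate token probabilities from observed frequencies."""
    counts = Counter(samples)
    total = len(samples)
    return {tok: n / total for tok, n in counts.items()}


def simulate_collapse(initial_probs, generations, sample_size, seed=0):
    """Repeatedly sample from the current model and refit on those samples.

    Returns the list of probability tables, one per generation,
    starting with the original distribution.
    """
    rng = random.Random(seed)
    probs = dict(initial_probs)
    history = [probs]
    for _ in range(generations):
        tokens = list(probs)
        weights = [probs[t] for t in tokens]
        samples = rng.choices(tokens, weights=weights, k=sample_size)
        probs = refit(samples)  # a token that was not sampled is gone for good
        history.append(probs)
    return history


# Made-up starting distribution with two rare tokens.
start = {"the": 0.6, "cat": 0.3, "axolotl": 0.09, "quokka": 0.01}
history = simulate_collapse(start, generations=50, sample_size=100)
for gen in (0, 10, 50):
    print(gen, sorted(history[gen]))
```

In this sketch a token that fails to appear in one generation's sample gets probability zero and can never return, so the vocabulary can only shrink over time, and the rarest tokens are the most likely to vanish first.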

A week later, researchers from Rice and Stanford universities published a paper titled "Self-consuming generative models go MAD" that reached a similar conclusion.

They tested image-generating AI programs and showed that outputs became more generic and riddled with undesirable elements as AI-generated data was added to the underlying model.

They labelled model collapse "Model Autophagy Disorder" (MAD) and compared it to mad cow disease, a fatal illness caused by feeding the remnants of dead cows to other cows.

- 'Doomsday scenario' -

These researchers worry that AI-generated text, images and video are clearing the web of usable human-made data.

"One doomsday scenario is that if left uncontrolled for many generations, MAD could poison the data quality and diversity of the entire internet," one of the Rice University authors, Richard Baraniuk, said in a statement.

However, industry figures are unfazed.

Anthropic and Hugging Face, two leaders in the field that pride themselves on taking an ethical approach to the technology, both told AFP they used AI-generated data to fine-tune or filter their datasets.

Anton Lozhkov, machine learning engineer at Hugging Face, said the Nature paper gave an interesting theoretical perspective but its disaster scenario was not realistic.

"Training on multiple rounds of synthetic data is simply not done in reality," he said.

However, he said researchers were just as frustrated as everyone else with the state of the internet.

"A large part of the internet is trash," he said, adding that Hugging Face already made huge efforts to clean data -- sometimes jettisoning as much as 90 percent.

He hoped that web users would help clean up the internet by simply not engaging with generated content.

"I strongly believe that humans will see the effects and catch generated data way before models will," he said.

X.AbuJaber--SF-PST