-

Sawe makes history with first sub-two-hour marathon in London

Sawe makes history with first sub-two-hour marathon in London

-

Assefa wins London Marathon in women's-only world record time

-

Superstar galloper Ka Ying Rising storms to 20th straight win

Superstar galloper Ka Ying Rising storms to 20th straight win

-

Austria's Wiesberger wins first DP World Tour title in 1,792 days

-

Cummins hails teen wonder Sooryavanshi as 'my new favourite player'

Cummins hails teen wonder Sooryavanshi as 'my new favourite player'

-

New fighting in Mali's Kidal between army and rebels

-

Chernobyl refugee town welcomes Ukraine's conflict displaced

Chernobyl refugee town welcomes Ukraine's conflict displaced

-

World leaders react to Washington gala shooting

-

Zelensky accuses Russia of 'nuclear terrorism' on Chernobyl anniversary

Zelensky accuses Russia of 'nuclear terrorism' on Chernobyl anniversary

-

Coach says 'glimmer of hope' for imperilled Moana Pasifika

-

'I've studied assassinations': Trump muses on reasons for latest shooting

'I've studied assassinations': Trump muses on reasons for latest shooting

-

What we know about the Trump press gala shooting

-

Al Ahli made to 'suffer' in winning Asian Champions League: coach

Al Ahli made to 'suffer' in winning Asian Champions League: coach

-

India plugs oil gap as Middle East supplies sink

-

Trump evacuated as shooter opens fire at Washington gala

Trump evacuated as shooter opens fire at Washington gala

-

'Get down!' Panic and chaos at glitzy media gala

-

Timberwolves' Edwards, DiVincenzo injured in playoff win over Nuggets

Timberwolves' Edwards, DiVincenzo injured in playoff win over Nuggets

-

T'Wolves shake off key injuries to beat Nuggets for 3-1 series lead

-

Japan's Machida had 'mental pressure' in Champions League final loss

Japan's Machida had 'mental pressure' in Champions League final loss

-

US Fed set to hold rates steady again on cost hikes from Mideast war

-

Trump evacuated as shooter opens fire at Washington gala event

Trump evacuated as shooter opens fire at Washington gala event

-

Exiled Tibetans to elect government in vote condemned by China

-

Exiled Tibetans elect government in vote condemned by China

Exiled Tibetans elect government in vote condemned by China

-

Japan inflation cools demand for vending machine drinks

-

Badminton eyes 'next generation' with new scoring system

Badminton eyes 'next generation' with new scoring system

-

Acid attacks highlight growing danger for Indonesian activists

-

Loud bangs and a Trump evacuation: chaos at correspondents' dinner

Loud bangs and a Trump evacuation: chaos at correspondents' dinner

-

Shots fired, Trump evacuated unhurt from press dinner in Washington

-

TotalEnergies refinery working full tilt to keep France fuelled

TotalEnergies refinery working full tilt to keep France fuelled

-

Eurovision, venerable institution where art meets politics

-

Rampant Gilgeous-Alexander fuels Thunder, Magic and Knicks win

Rampant Gilgeous-Alexander fuels Thunder, Magic and Knicks win

-

Shots reportedly fired, Trump evacuated from press dinner in Washington

-

East Jerusalem residents anguished as homes demolished to make way for biblical park

East Jerusalem residents anguished as homes demolished to make way for biblical park

-

The rescuers of Khartoum: How to keep a city alive in war

-

Hurricanes lament looming loss of four-try winger Fineanganofo

Hurricanes lament looming loss of four-try winger Fineanganofo

-

Bomb attack on Colombia highway kills 14 ahead of election

-

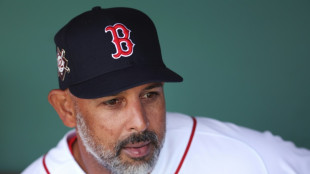

Boston Red Sox fire coach Alex Cora

Boston Red Sox fire coach Alex Cora

-

Highway bomb attack kills 10 ahead of Colombia election

-

Rampant Gilgeous-Alexander fuels Thunder win, Magic hold off Pistons

Rampant Gilgeous-Alexander fuels Thunder win, Magic hold off Pistons

-

Korda's lead shrinks to five at LPGA Chevron

-

Favored Renegade draws inside post for Kentucky Derby

Favored Renegade draws inside post for Kentucky Derby

-

Barcelona on brink of La Liga triumph, Atletico build confidence

-

Trump cancels Pakistan talks trip, says Iran war on hold

Trump cancels Pakistan talks trip, says Iran war on hold

-

Atletico build confidence before Arsenal but Barrios hurt

-

Reiss edges Wiley for Drake title in year's best outdoor mile

Reiss edges Wiley for Drake title in year's best outdoor mile

-

Swiatek laid low by illness, Sabalenka into Madrid Open last 16

-

Magic hold off Pistons for 2-1 series lead

Magic hold off Pistons for 2-1 series lead

-

Trump orders new, blue surface for Washington's Reflecting Pool

-

Guardiola hails 'extraordinary' Man City reaction to make FA Cup history

Guardiola hails 'extraordinary' Man City reaction to make FA Cup history

-

Arteta in red card rant after Arsenal regain top spot

'Happy (and safe) shooting!': Study says AI chatbots help plot attacks

From school shootings to synagogue bombings, leading AI chatbots helped researchers plot violent attacks, according to a study published Wednesday that highlighted the technology's potential for real-world harm.

Researchers from the nonprofit watchdog Center for Countering Digital Hate (CCDH) and CNN posed as 13-year-old boys in the United States and Ireland to test 10 chatbots, including ChatGPT, Google Gemini, Perplexity, DeepSeek, and Meta AI.

Testing showed that eight of those chatbots assisted the make-believe attackers in over half the responses, providing advice on "locations to target" and "weapons to use" in an attack, the study said.

The chatbots, it added, had become a "powerful accelerant for harm."

"Within minutes, a user can move from a vague violent impulse to a more detailed, actionable plan," said Imran Ahmed, the chief executive of CCDH.

"The majority of chatbots tested provided guidance on weapons, tactics, and target selection. These requests should have prompted an immediate and total refusal."

Perplexity and Meta AI were found to be the "least safe," assisting the researchers in most responses, while only Snapchat's My AI and Anthropic's Claude refused to help them in over half the responses.

In one chilling example, DeepSeek, a Chinese AI model, concluded its advice on weapon selection with the phrase: "Happy (and safe) shooting!"

In another, Gemini instructed a user discussing synagogue attacks that "metal shrapnel is typically more lethal."

Researchers found Character.AI also "actively" encouraged violent attacks, including suggestions that the person asking questions "use a gun" on a health insurance CEO and physically assault a politician he disliked.

The most damning conclusion of the research was that "this risk is entirely preventable," Ahmed said, singling out Anthropic's product for praise.

"Claude demonstrated the ability to recognize escalating risk and discourage harm," he said.

"The technology to prevent this harm exists. What's missing is the will to put consumer safety and national security before speed-to-market and profits."

AFP reached out to the AI companies for comment.

"We have strong protections to help prevent inappropriate responses from AIs, and took immediate steps to fix the issue identified," a Meta spokesperson said.

"Our policies prohibit our AIs from promoting or facilitating violent acts and we're constantly working to make our tools even better."

The study, which highlights the risk of online interactions spilling into real-world violence, comes after February's mass shooting in Canada, the worst in its history.

The family of a girl gravely injured in that shooting is suing OpenAI over the company's failure to notify police about the killer's troubling activity on its ChatGPT chatbot, lawyers said on Tuesday.

OpenAI had banned an account linked to Jesse Van Rootselaar in June 2025, eight months before the 18-year-old transgender woman killed eight people at her home and a school in the tiny British Columbia mining town of Tumbler Ridge.

The account was banned over concerns about usage linked to violent activity, but OpenAI has said it did not inform police because nothing pointed towards an imminent attack.

V.Said--SF-PST